PCI-passthrough: Windows gaming... on Linux

Motivation

I am not a gamer by any means. However, I do enjoy gaming once in a while, and always find it to be a fun activity when having friends over. At the same time, I am an ardent Linux user, and although gaming on Linux has made great strides in recent years (see: Wine, Proton, Vulkan, and Lutris), the truth of the matter is that it sadly still has some way to go before it is as seamless as it currently is on Windows.

The immediate solution to make gaming possible while simultaneously running Linux as a main OS would be to use dual-boot: Having both Linux and Windows installed on separate partitions (or disks) on my system, and simply switching between the two whenever needed. This solution comes with a lot of disadvantages:

- Having to power down my Linux environment every time I want to play a game is something I have tried before, and I am not a fan of it. Even resorting to hibernation instead of a complete power-off was still a turnoff for me.

- As I do not trust Windows with any of my private data, games would be the only data made available on my Windows installation. This hugely limits the usability of my system when booted into Windows (e.g. listening to my music, watching something out of my library on my second monitor, etc).

- I have had my share of Windows installations taking it upon themselves to update my firmware configuration (e.g. by updating the boot entries) or, even worse, fiddling with other disks/partitions they see available on the system.

For these reasons, after a few early years of dual-booting Windows with Linux, I simply stopped doing so, and as such am now in need of a different solution. One way to avoid running Windows on my physical machine would be to simply run it on a… virtual machine! This alternative negates almost all the disadvantages listed above, and comes with all the advantages of virtualization, namely:

- The possibility of creating snapshots of the virtualized system, and ability to revert the system to previous states. Additionally, a virtual system can be cloned, backed up, and loaded as many times as one might need.

- A finer control over the virtualized system’s (Windows) access to physical resources, specifically, storage and network I/O.

In this article, I will attempt to explain the requirements and configuration necessary to achieve such a setup. I will also walk you through some of the issues I have faced when trying to achieve such a configuration on my system.

PCI-passthrough

Although I have presented virtualization as the solution to my “gaming problem”, the truth is slightly more complicated than that. In order to understand why that is, we need to take a deeper look into how GPUs operate in a normal system, and how that changes when moving into a virtualized system.

Direct Memory Access

Gaming relies primarily on 3D acceleration, which is almost always delegated to a discrete GPU. To achieve their high performance, GPUs need to load a lot of data from system memory (in the form of textures and polygon meshes). This fast loading is accomplished using a technique called Direct Memory Access (DMA)[1]. This technique allows the GPU (and more generally, any DMA-capable device) to directly access main memory without going through the CPU to perform read/write operations.

Direct Memory Access offloads the task of memory access from the CPU and onto a DMA controller. The CPU is thus not fully occupied by the memory access operations, and only needs to be notified once the memory access is finished. As such, high data-rate transfers to and from memory are possible, without blocking the CPU.

DMA is still managed by the CPU, as it is the CPU’s role to control access to the system memory it has access to. Herein lies the problem with attempting to use the same technique in a virtualized system. A virtual machine runs on a virtual CPU which only has access to the virtual memory range assigned to it, and pooled from the physical memory of the host system. The virtual system does not know how its virtual memory maps to the host system’s physical memory. Similarly, a GPU on the host system would also not know about this mapping. If the virtual system attempts to give direct access to its memory range to a host’s GPU, the range being communicated to the GPU will only make sense within the guest’s memory. However, when the GPU attempts to use that range, it will do so when accessing the physical memory. This will result in calls to unexpected memory ranges, and will probably cause memory corruption. This problem can be solved by the hypervisor, wherein it handles translation between the virtual and physical memory ranges for the virtual machine. However, this translation results in delayed memory access, which in the case of 3D acceleration, will cause a big drop in performance.

Thankfully, using hypervisor translation is not the only way to get Direct Memory Access working in a virtualized environment. In order to understand how this other solution works, we need to take a deeper look into how GPUs (and PCI-express devices in general) make use of DMA.

IOMMU

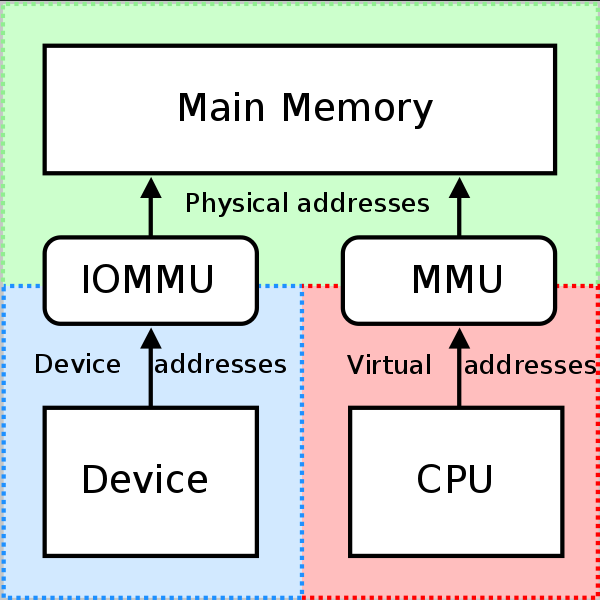

Figure 1: IOMMU and MMU operation


The Input/Output Memory Management Unit (or IOMMU for short) is the component which allows Direct Memory Access capable I/O buses (such as PCIe) to access main memory. An IOMMU creates a virtual address space and makes it available to the device(s) connected to it. The IOMMU then handles the remapping of addresses within this virtual space, known as I/O Virtual Addresses (IOVA), onto the system’s physical memory[2]. This is the same principle through which the Memory Management Unit (MMU) allows the CPU to translate its virtual memory to the system’s physical memory (see Figure 1)[3].

Not all IOMMUs have the same level of functionality. In fact, the address translation detailed above is only the classic functionality of IOMMUs. A more modern functionality, which also happens to be essential for virtualization, is isolation. IOMMUs are capable of isolating different PCIe devices into different IOMMU groups. These groups represent the smallest sets of devices that can be considered completely isolated from other PCIe devices with respect to their DMA calls. This is because devices within one group share the same IOVA space[2].
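To make the two roles of the IOMMU (translation and isolation) more tangible, here is a toy model in Python. It is only a sketch under simplifying assumptions: the `ToyIommu` class, the 4 KiB page size, and the example addresses are all made up for illustration, and a real IOMMU does this in hardware with multi-level page tables.

```python
PAGE_SIZE = 4096  # bytes per page in this toy model (assumption)


class IommuFault(Exception):
    """Raised when a device touches an address its group has not been given."""


class ToyIommu:
    """Keeps one IOVA -> physical page table per IOMMU group."""

    def __init__(self):
        self.page_tables = {}  # group number -> {IOVA page: physical page}

    def map_page(self, group, iova, phys):
        # Record that, for this group, the page holding `iova` is backed
        # by the physical page holding `phys`.
        table = self.page_tables.setdefault(group, {})
        table[iova // PAGE_SIZE] = phys // PAGE_SIZE

    def translate(self, group, iova):
        # Split the address into page number and offset, look the page up in
        # the group's own table, and rebuild the physical address.
        page, offset = divmod(iova, PAGE_SIZE)
        table = self.page_tables.get(group, {})
        if page not in table:
            raise IommuFault(f"group {group}: IOVA {iova:#x} is not mapped")
        return table[page] * PAGE_SIZE + offset


iommu = ToyIommu()
iommu.map_page(group=28, iova=0x1000, phys=0x7F000)
print(hex(iommu.translate(28, 0x1010)))  # 0x7f010
```

A device in another group (say, group 32) asking for the same IOVA would get an `IommuFault`, since each group only ever sees its own table. That is the isolation property in miniature.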

This isolation capability became widely possible with the advent of modern PCIe, which tags all transactions of a device with an ID that is unique to it. However, the PCIe specification also allows transactions to be re-routed within a PCIe interconnect fabric. This means that within the PCIe architecture, a downstream port can re-route transactions from one downstream device to another, without them ever reaching the IOMMU for DMA translation. These transactions are known as peer-to-peer transactions[4]. IOMMU groups take this into account by grouping together all devices capable of peer-to-peer transactions with each other, which is why a group as a whole can be considered completely isolated from the rest of the system[5].

To summarize, using IOMMU isolation, it is possible to completely isolate DMA calls from all devices within an IOMMU group, and to assign them to a virtual machine’s memory space. Within the virtual machine, the device will act as if physically attached to it. This is what is known as PCI passthrough. Isolating a device is only the first step in passing it to a guest system, as one still needs to make sure that the device is correctly initialized and loaded with the appropriate drivers. This is where the next piece of the PCI-passthrough puzzle comes into play.

VFIO

The GPU to be passed to the guest machine is (necessarily) physically attached to the host system. This means that during boot of the host system (before any virtualization is started), the host operating system will detect the GPU and will attempt to bind its drivers to it. This implies that, when it comes time to pass this GPU to the guest system, it will be necessary to unbind these drivers, and then rebind other drivers which will make the device available to the guest system[6].

For a lot of other devices (e.g. USB controllers, storage drives), driver unbinding and re-binding is not an issue and can be performed after system boot. However, due to the complexity of their drivers, the same does not hold for GPUs, where rebinding comes with certain issues[6]. As such, the alternative is to use stub drivers, which bind to the device early on in the boot process before the host’s drivers have a chance to do so. The Virtual Function Input/Output (or VFIO) driver framework provides exactly such stub drivers.

VFIO is a “secure, userspace driver framework”[7]. Providing stub drivers for devices meant to be passed through to a virtual machine is only one of the functionalities it makes available. In fact, VFIO can decompose a device into a userspace API that allows programs in userspace to configure/program the device, manage its signals and interrupts, and control its data transfers[7]. In the context of virtualization, an emulator such as QEMU can then make use of this API to reconstruct a device which it can then present to a virtual machine. From the perspective of the VM, it looks as if the device is physically attached to it[8].

VFIO allows for near-native performance from within the guest system, partly because it provides DMA mapping and isolation using the hardware IOMMU. When a device is to be passed through to the guest system, its whole IOMMU group is isolated and assigned VFIO stub drivers. This isolation allows VFIO to handle translation of all DMA calls and, when used by an emulator/virtualizer, to redirect those calls into the guest system’s memory space. The fact that VFIO operates from within the Linux kernel (and not from within the hypervisor) also allows for lower latency and better performance.

In summary, PCI-passthrough is achieved through the interworking of the following components:

- The IOMMU makes it possible to completely isolate groups of devices on the host system from all other devices.

- VFIO can take one of these isolated groups and decompose it into an API usable from userspace, with near-native performance, thanks to the DMA isolation that IOMMU grouping allows.

- QEMU makes use of the VFIO API to present a virtual device to the VM, which maps to the physical device with near-native performance.

In the following section we will see what that looks like in a real configuration, namely my own.

The guest GPU

The host system I will be using is the one I have covered through my computer build series. As detailed in part 4, my system only has a single GPU (the CPU has no integrated graphics). In the previous section, we covered how passing a GPU to a virtual machine requires the use of VFIO drivers to prevent the host system from binding its own drivers to it. This effectively makes the GPU unusable by the host system, meaning that a PCI passthrough setup will require two GPUs: One to be used by the host, and the other to be passed to the guest. As such, the first step for me was to add a second GPU to my system.

Figure 2: The PowerColor Red Devil RX 6700XT


For my guest GPU I decided to go with the PowerColor Red Devil RX 6700XT. I had previously chosen the Fighter RX 6600XT (also from PowerColor) as my host GPU, and found it to be a very quiet and well-performing card. Since I would use my guest system to do the bulk of my GPU-demanding work, I decided to go for a higher-range card, hence the 6700XT. Initially, I had in mind to go with an even higher-range GPU (a 6800XT). However, cards from the 6800XT upwards come almost exclusively in the 3-slot variety. Furthermore, the clearance between my motherboard’s PCI slots and the case would have caused any such card to sit directly on the power supply shroud, leaving no space for airflow or I/O cabling. High-frame-rate 4K gaming would push my 6700XT to the limit of its abilities (AMD advertises the 6700XT cards as optimal for 1440p gaming). However, that is not an issue, as I do not own a 4K monitor, nor am I planning on getting one any time soon.

For my setup, I followed the instructions detailed in the Arch Wiki article which covers PCI-passthrough[9]. The article mentions that a prerequisite for the GPU to be passed is UEFI support in its ROM. This is because the guest system created through the article’s steps is set to use UEFI firmware (OVMF). However, the article also mentions that PCI-passthrough is possible to guest systems that use BIOS firmware (SeaBIOS), but the steps to achieve that are not covered. All GPUs from 2012 and later should have ROM support for UEFI, and that is also the case for the GPU I chose for my setup.

Before moving forward, I would like to already highlight the fact that I ended up having some issues with the PowerColor Red Devil RX 6700XT. As such, if you are using the information in this article to help guide your decision, then I would recommend reading all the way through the Troubleshooting section where I go more into the details of the issues I faced.

Host setup

I configured my system following the instructions detailed in the Arch Wiki article: PCI passthrough via OVMF. The article is really comprehensive and deeply details all of the steps required to configure a working PCI-passthrough setup, so I can only recommend giving it a read. As the Arch Wiki article already does a good job of it, I will not go into every single step, but cover the parts I found most relevant, and emphasize the article’s explanations with practical output from my system.

IOMMU configuration

As highlighted in the IOMMU section, IOMMU support is a prerequisite for PCI-passthrough. IOMMU support depends on the CPU, chipset, and motherboard. As such, this is a consideration I kept in mind when choosing my CPU and motherboard (see part 1 of my computer build series). In my case, I am using a Ryzen 9 5900X which, like all AMD processors of the last few generations, supports AMD-Vi, AMD’s implementation of IOMMU[10]. The same is true of my motherboard: the MSI MEG X570 Unify I chose provides support for IOMMU, which was activated by default in its firmware.

Furthermore, an important factor behind my choice of motherboard was its good IOMMU grouping. This is also something we have covered in the IOMMU section. IOMMU groups are the smallest units the IOMMU can distinguish and isolate. As such, all devices within the group of the device to be passed will also need to be passed to the guest system. Therefore, it is very important that the motherboard allows for granular grouping, populating each group with as few devices as possible, thus allowing for maximum flexibility. The Arch Wiki article provides a small bash script that prints out all the IOMMU groups exposed by the motherboard, as well as all devices within those groups. The output returned in my case is shown below. I have abbreviated the list of groups to show only those containing my two GPUs.

    IOMMU Group 28:
        2f:00.0 VGA compatible controller [0300]: Advanced Micro Devices, Inc. [AMD/ATI] Navi 22 [Radeon RX 6700/6700 XT/6750 XT / 6800M] [1002:73df] (rev c1)
    IOMMU Group 29:
        2f:00.1 Audio device [0403]: Advanced Micro Devices, Inc. [AMD/ATI] Navi 21/23 HDMI/DP Audio Controller [1002:ab28]
    [...]
    IOMMU Group 32:
        32:00.0 VGA compatible controller [0300]: Advanced Micro Devices, Inc. [AMD/ATI] Navi 23 [Radeon RX 6600/6600 XT/6600M] [1002:73ff] (rev c1)
    IOMMU Group 33:
        32:00.1 Audio device [0403]: Advanced Micro Devices, Inc. [AMD/ATI] Navi 21/23 HDMI/DP Audio Controller [1002:ab28]

As you can see, my motherboard offers good IOMMU grouping. Not only is each GPU in its own group, but the HDMI audio of each card is also isolated into its own group by the IOMMU. This meant that isolating the guest GPU (groups 28 and 29) was straightforward for me, and I could move on to the next step of the configuration, namely assigning VFIO drivers to it.
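For readers who prefer Python over bash, the same grouping can be read directly from sysfs. The following is a minimal sketch, not the Arch Wiki script: it assumes the groups are exposed under `/sys/kernel/iommu_groups/<group>/devices/`, the function name `list_iommu_groups` is my own, and it prints only PCI addresses rather than the full `lspci` descriptions shown above.

```python
from pathlib import Path


def list_iommu_groups(root="/sys/kernel/iommu_groups"):
    """Return {group number: sorted list of PCI addresses} read from sysfs."""
    groups = {}
    root_path = Path(root)
    if not root_path.is_dir():
        return groups  # IOMMU disabled, or not a Linux host
    for group_dir in root_path.iterdir():
        devices_dir = group_dir / "devices"
        if not group_dir.name.isdigit() or not devices_dir.is_dir():
            continue
        groups[int(group_dir.name)] = sorted(d.name for d in devices_dir.iterdir())
    return groups


if __name__ == "__main__":
    for number, devices in sorted(list_iommu_groups().items()):
        print(f"IOMMU Group {number}:")
        for address in devices:
            print(f"\t{address}")
```

An empty result usually means that the IOMMU is disabled in the firmware or in the kernel configuration.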

Please note that the IOMMU grouping shown above is what my motherboard provides with BIOS version 7C35vAA. Firmware updates can change this, so even if you are using the same motherboard, you might see a different grouping than mine.

VFIO drivers’ assignment

This step is usually pretty straightforward, as all that is needed is to tell the kernel to use the vfio-pci driver for the devices to be passed through. The devices in my case are the guest GPU (PCI address 2f:00.0) and its HDMI audio (PCI address 2f:00.1). The default way of assigning the driver to these devices is through their vendor:device ID pairs rather than their PCI addresses (either using a kernel parameter or by specifying them in the initramfs image). Looking back at the IOMMU groups output, these IDs would be 1002:73df for the GPU and 1002:ab28 for its HDMI audio.
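As a small illustration of where these IDs come from, the sketch below pulls the trailing `[vendor:device]` pair out of `lspci -nn` style lines and joins them into the comma-separated form expected by the `vfio-pci.ids=` kernel parameter. The helper name `vendor_device_id` is hypothetical, and the two input lines are simply copied from my IOMMU groups output.

```python
import re

# Matches a "[vendor:device]" pair such as [1002:73df]; class codes like [0300]
# contain no colon and are therefore skipped.
ID_PATTERN = re.compile(r"\[([0-9a-f]{4}:[0-9a-f]{4})\]")


def vendor_device_id(lspci_line):
    """Return the last [vvvv:dddd] pair found on the line, or None."""
    matches = ID_PATTERN.findall(lspci_line)
    return matches[-1] if matches else None


guest_devices = [
    "2f:00.0 VGA compatible controller [0300]: Advanced Micro Devices, Inc. [AMD/ATI] "
    "Navi 22 [Radeon RX 6700/6700 XT/6750 XT / 6800M] [1002:73df] (rev c1)",
    "2f:00.1 Audio device [0403]: Advanced Micro Devices, Inc. [AMD/ATI] "
    "Navi 21/23 HDMI/DP Audio Controller [1002:ab28]",
]

ids = [vendor_device_id(line) for line in guest_devices]
print("vfio-pci.ids=" + ",".join(ids))  # vfio-pci.ids=1002:73df,1002:ab28
```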

The keen among you might have noticed that the HDMI audio devices of both GPUs have the same vendor:device ID. This is not unexpected, as both cards are of the same generation and from the same manufacturer. However, this posed an issue, as it meant that I could not use this ID to specify which device should be bound to the VFIO driver. Thankfully, the article also covers this possibility in its Special procedures section. It is possible to create a bash script that manually overrides the driver used for specific devices, identified by their PCI address (instead of their vendor:device ID). This script is then added to the initramfs.
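The mechanism behind such a script is the `driver_override` attribute that the kernel exposes for each PCI device in sysfs. The sketch below shows the idea in Python purely for illustration: the actual script from the Arch Wiki is a shell script meant to run inside the initramfs, the function name `override_driver` is my own, and the `0000:` domain prefix on the addresses is an assumption about my system.

```python
from pathlib import Path

# Full PCI addresses (domain:bus:device.function) of the guest GPU and its
# HDMI audio function. The "0000" domain is assumed.
GUEST_DEVICES = ["0000:2f:00.0", "0000:2f:00.1"]


def override_driver(addresses, driver="vfio-pci", sysfs_root="/sys/bus/pci/devices"):
    """Ask the kernel to bind only `driver` to each device; return those handled."""
    handled = []
    for address in addresses:
        override = Path(sysfs_root) / address / "driver_override"
        if not override.parent.is_dir():
            continue  # no such device on this machine
        override.write_text(driver + "\n")  # needs root on a real system
        handled.append(address)
    return handled
```

Calling `override_driver(GUEST_DEVICES)` as root would only record the preference. The override has to be in place before the regular GPU driver gets a chance to bind, which is why the real script runs so early in the boot process.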

Guest setup

Setting up the guest was also very straightforward, and I only had to follow the instructions in the Arch Wiki article. The summary of the steps to follow is:

- Create a Windows virtual machine using the OVMF firmware. The Open Virtual Machine Firmware (or OVMF) is a sub-project of Intel’s EFI Development Kit II (edk2), and enables UEFI support for virtual machines[11].

- Remove the emulated video output created by default, and pass the PCI device corresponding to the guest GPU to the VM. Additionally, the default emulated keyboard and mouse inputs need to be removed and replaced by a passed-through keyboard and mouse. This is easily done using virt-manager’s GUI.

In my case, for the boot drive of my guest system, I used a VirtIO disk image, which allows for improved performance thanks to para-virtualization[12]. Para-virtualization is its own interesting and rich topic, to which I might dedicate a future article. In short, however, para-virtualization operates by presenting a software interface (an API) to the guest system, which allows it to offload some critical operations from the virtual domain to the host[13]. Examples of such critical operations include network operations and block device access[8]. To make use of para-virtualization, the guest system needs to be modified to use the software interface made available by the host system. This can be achieved through the use of specific drivers in the guest system. In my specific case, I needed to install VirtIO drivers in my guest system during the initial setup of the virtual machine[14].

Furthermore, I have also passed through a secondary SSD drive, which I had mounted into the second M.2 slot of my motherboard, and one of my USB controllers. Both the M.2 slot and the USB controller were isolated in their own separate groups:

    IOMMU Group 23:
        23:00.0 Non-Volatile memory controller [0108]: Samsung Electronics Co Ltd NVMe SSD Controller SM981/PM981/PM983 [144d:a808]
    [...]
    IOMMU Group 36:
        34:00.3 USB controller [0c03]: Advanced Micro Devices, Inc. [AMD] Matisse USB 3.0 Host Controller [1022:149c]

As mentioned in the VFIO section, devices such as drives and USB controllers do not require a VFIO stub driver, and their drivers can be unbound and rebound while the host is running. This meant that I did not need to go through the VFIO drivers’ assignment step as I did for the GPU, and could simply pass them using the GUI in virt-manager.

Looking Glass

The discussion in the previous two sections covers how to configure GPU passthrough on the host and guest systems respectively. Such a configuration allows the guest system to obtain direct control over the passed GPU and to use it for 3D acceleration. However, this also means that video from the guest system will be output from the guest GPU. In order to view the guest system’s output, an additional monitor therefore needs to be connected to the guest GPU. Alternatively, a multi-input monitor can be used, and its input switched to the guest GPU each time the virtual machine is started. This would be quite annoying to deal with, and thanks to the Looking Glass project I don’t have to go through such a hassle.

Looking Glass is a program which copies the GPU frame-buffer from a Windows guest to a Linux host. This makes it possible to show the video output of a guest system in control of a passed-through GPU within a window on the host system, without the need for an additional monitor (so-called headless passthrough)[15]. This is achieved by creating a shared memory buffer between the guest and the host, thus allowing the host GPU to show frames from the guest GPU as soon as they are ready. As such, the latency between the two GPUs is extremely low (in contrast to other technologies such as streaming or remote desktop). This low latency is what makes such a solution appropriate for high-performance scenarios such as gaming. Finally, Looking Glass makes use of the SPICE protocol in order to provide keyboard and mouse input and, as of version B6, audio input and output too[16].
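The shared memory buffer has to be large enough for the guest’s resolution. As far as I understand the sizing rule from the Looking Glass documentation, it amounts to two frames plus 10 MiB of overhead, rounded up to the next power of two. The sketch below encodes that rule. Treat the constants, the default of 4 bytes per pixel, and the function name `shared_memory_mib` as assumptions to be checked against the documentation for your version.

```python
import math


def shared_memory_mib(width, height, bytes_per_pixel=4):
    """Estimate the shared memory size (in MiB) for a given guest resolution."""
    frame_bytes = width * height * bytes_per_pixel * 2  # room for two frames
    required = frame_bytes / 1024 / 1024 + 10  # plus 10 MiB of overhead
    return 2 ** math.ceil(math.log2(required))  # round up to a power of two


print(shared_memory_mib(2560, 1440))  # 64
```

For a 1440p guest, which is the resolution my 6700XT is aimed at, this gives 64 MiB.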

Looking Glass uses a server/client model, with the server residing in the guest system, and the client on the host system. As such, its installation requires configuration on both the host and guest. The installation was also straightforward, and the project’s documentation as well as the Arch Wiki VFIO article do a great job of explaining all the required steps.

Troubleshooting

As mentioned earlier, the PCI-passthrough setup process was pretty straightforward. However, I still had to deal with some minor issues, which I would like to cover in more detail in this section.

Issue: Host unable to boot and stuck in black screen after enabling vfio

One issue I faced after binding VFIO drivers to the guest GPU was that my host system (i.e. the host GPU) no longer showed any video output. The system seemed to get stuck at boot. I am still unsure whether I understood the cause of the issue correctly. The way I understand it, this is caused by my motherboard’s UEFI firmware wrongly assuming that the guest GPU is the one to be used during boot. The firmware thus shows the splash screen and boot menus on the guest GPU, and early in the boot process the kernel’s very simple efifb framebuffer driver takes over that same firmware-provided framebuffer. The issue is that, the moment the boot process reaches the initramfs step, the vfio-pci stub driver is assigned to the guest GPU and no more video can be output through it. If you need a refresher about the boot process and UEFI firmware, you can refer to my article about the topic.

One can blindly navigate the boot menu until the system is booted and userspace is reached. However, because I want to view boot messages, this solution does not work for me. A possible solution to this issue would be to specify which GPU should be used during boot from within the motherboard’s firmware settings menu. Sadly, that is not an option offered by my motherboard.

Another solution is to simply unplug any cables connected to the guest GPU. This way, during boot, the UEFI detects that all ports of the guest GPU are disconnected, and thus uses the host GPU for booting. The drawback is that the guest GPU needs to have one of its ports connected when the guest system is booting, in order for it to be recognized and initialized. This means that one would need to unplug the guest GPU’s cable during boot, and then plug it back in before the guest system is needed. This obviously was not something I wanted to do every time I booted my system.

Thankfully, this issue is also covered in the troubleshooting section of the Arch Wiki article. The suggested solution is to disable the efifb driver through a kernel parameter set in the bootloader (GRUB) configuration. As a result, no video output is shown until the initramfs has been loaded, at which point the host GPU takes over and displays the boot messages.

The next issue that arose after disabling efifb in the bootloader was that the Xorg server would fail to start, complaining about no screens being found. Here, the fix was to tell Xorg explicitly which GPU to use, by means of the host GPU’s PCI bus ID. This was achieved by creating a new file /etc/X11/xorg.conf.d/10-amd.conf with the following content:

    Section "Device"
        Identifier "AMD GPU"
        Driver "amdgpu"
        BusID "PCI:50:0:0"
    EndSection

It is very important to note that the BusID specified in the Xorg configuration needs to be given as colon-separated decimal values. In my case, my host GPU’s PCI address is 32:00.0 (see the IOMMU groups output in the IOMMU configuration section). This value is in hexadecimal and needs to be translated into decimal: 0x32 = 3 × 16 + 2 = 50, hence the value used in my Xorg configuration. Additionally, the original PCI address uses a dot to separate the last value (the function number), and it is important that this be changed into a colon too.
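Since this conversion is easy to get wrong by hand, here is a small Python helper that performs it. The function name `xorg_bus_id` is my own, and the sketch assumes the address is given in the form printed by lspci, with or without a leading domain.

```python
def xorg_bus_id(pci_address):
    """Convert an lspci-style address such as "32:00.0" into Xorg's BusID format."""
    bus_device, function = pci_address.split(".")
    parts = bus_device.split(":")
    bus, device = parts[-2], parts[-1]  # ignore an optional leading domain
    # Each field is hexadecimal in lspci output but decimal in xorg.conf.
    return f"PCI:{int(bus, 16)}:{int(device, 16)}:{int(function, 16)}"


print(xorg_bus_id("32:00.0"))  # PCI:50:0:0
```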

The two modifications mentioned here work as a temporary fix, but I am not satisfied with not being able to see the early splash screen and menu during my system’s boot. Weirdly enough, this output is shown on a secondary monitor connected to my guest GPU, and I am not sure why. I would like to take a deeper look into this issue, perhaps in a later article.

Issue: GPU does not correctly reset on VM shutdown

This is the issue I have been most disappointed by. The AMD reset bug has been well documented on previous generations of AMD GPUs. I was aware this bug existed, but was under the impression that it had been solved for the RX6000 series of AMD cards and later[17]. Sadly, that is not the case.

The bug happens upon shutting down a guest system which makes use of a passed-through AMD GPU. For some reason, the GPU is not correctly reset, and cannot be used at the next guest system boot unless the host system is power cycled. Previously, it was required to completely reboot the host system. Thankfully, in my case a system suspension is sufficient to correctly reset the GPU, and for it to be used correctly on the next guest system boot.

The fix presented by the Arch Wiki article is to use a kernel module provided by the vendor-reset project. However, as of the writing of this article, the project’s supported devices stop at the RX5000 series of GPUs and do not include the RX6000 generation, to which my GPU belongs. It does not seem that all RX6000 cards are affected by this issue; rather, it appears to depend on the specific model. For example, cards from Sapphire seem to generally not be affected[17][18].

As it stands currently, I am still suffering from this issue, and need to suspend my system between every two boots of my guest Windows system. I would like to look more into it, and perhaps find a solution. A possible solution might be to use a different VBIOS version than the one my GPU currently uses, and which would allow my host system to correctly reset it. Such a VBIOS does not need to be flashed onto the GPU and can be given as an argument to QEMU. A database of VBIOS files for a large collection of GPUs is available on TechPowerUp.

Issue: No video output in Looking Glass

One final issue I faced after setting up Looking Glass was that I still could not get any output on my client instance. In my case, this was due to the default CPU layout used by virt-manager/QEMU: the default layout exposes only one apparent CPU, and this seemingly causes an issue for Looking Glass. The solution was to use the Manually set CPU topology option in virt-manager, and to set the CPU count to match the number of vCPUs assigned to the guest system (with one thread per vCPU).
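In libvirt’s domain XML, this setting ends up as a `<topology>` element whose sockets, cores and threads must multiply out to the number of vCPUs. The sketch below builds such an element. The helper name `cpu_topology` is hypothetical, and using a single socket is my assumption about a sensible layout, not something Looking Glass prescribes.

```python
def cpu_topology(vcpus, sockets=1, threads=1):
    """Return a libvirt-style <topology> element covering exactly `vcpus` CPUs."""
    cores, remainder = divmod(vcpus, sockets * threads)
    if remainder:
        raise ValueError("vcpus must be a multiple of sockets * threads")
    return f"<topology sockets='{sockets}' cores='{cores}' threads='{threads}'/>"


print(cpu_topology(10))  # <topology sockets='1' cores='10' threads='1'/>
```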

Conclusions

My final guest system configuration is as follows:

- CPU: 10 vCPUs out of the 24 threads available on my 12-core CPU.

- Memory: 16GB out of the 64GB available on my system.

- Storage: For the boot drive I used a 100GB VirtIO disk image which can be expanded at a later date if necessary. Additionally, I used a PCI-passed-through 1TB M.2 disk just for Steam’s game storage. This layout will hopefully allow me to easily transfer my games library to another VM or another physical system, with as little hassle as possible.

- GPU: My passed-through AMD RX 6700XT.

All in all, I am very satisfied with my system. I have been wanting to try PCI-passthrough for gaming since the moment I first grasped its full potential a few years ago. The ability to have a completely virtualized latest-generation gaming system (at least mine was when I embarked on this journey) at one’s fingertips truly is magical, and is a testament to the hard work of the free-software and open-source communities.

This computer is my first build ever, and was built with PCI-passthrough as one of its main use cases. When compiling the parts list, I was very worried about issues that could arise when it finally came time to configure the PCI-passthrough setup. I am happy that the hardware (CPU/motherboard/GPU) I picked allowed for a working setup. In particular, the motherboard’s IOMMU grouping made for a very easy configuration. However, as I have highlighted, there are still some issues that I might not have faced had I gone with different hardware. Specifically, the inability to set the boot GPU in the motherboard’s BIOS is bothersome, and the AMD reset bug is a bit disappointing. I do hold high hopes of solving these issues in the future, and will keep you updated in case I do, so stay tuned for that. Until then, however, I finally get to enjoy my new Windows gaming machine, all from the comfort of my Linux environment.

References

Red Hat Customer Portal: Virtualization Deployment and Administration Guide - Appendix E. Working with IOMMU Groups

Spaceinvader One (on YouTube): A little about Passthrough, PCIe, IOMMU Groups and breaking them up

Alex Williamson - IOMMU Groups, inside and out (Archived on 06.02.2023)

Alex Williamson - VFIO GPU How To series, part 3 - Host configuration (Archived on 25.02.2023)

Alex Williamson - KVM Forum 2016: An Introduction to PCI Device Assignment with VFIO (Archived on 31.01.2022)

Red Hat Customer Portal: Virtualization Getting Started Guide - 3.4. Virtualized Hardware Devices

Red Hat (July 2014): Open Virtual Machine Firmware (OVMF) Status Report (Archived on 06.03.2023)

Pavol Elsig (on YouTube): Adding VirtIO and passthrough storage in Virtual Machine Manager

The Fedora Project User Documentation: Creating Windows virtual machines using virtIO drivers

Level1Linux (on YouTube): New Tech for IOMMU Users: Looking Glass (Headless Passthrough)

Looking Glass doc: Installation # Keyboard/mouse/display/audio

Level1Linux (on YouTube): Radeon 6800 (XT): Are they the best Linux Gaming Cards (that you can’t buy)?

Level1Techs Forum: 6700XT - reset bug? (Archived on 10.04.2023)